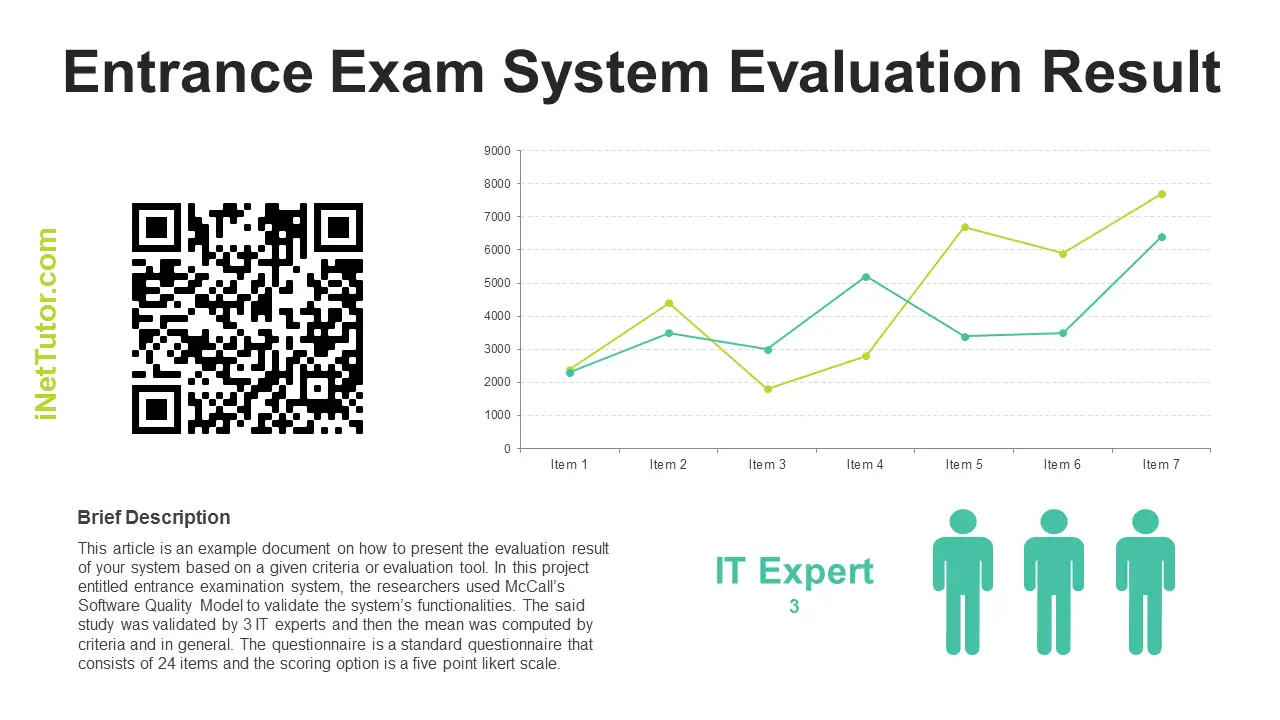

Entrance Exam System Evaluation Result

This article is an example of how to present the evaluation results of a system based on a given set of criteria or an evaluation tool. In this project, entitled Entrance Examination System, the researchers used McCall’s Software Quality Model to validate the system’s functionalities. The system was evaluated by three IT experts, and the mean rating was computed for each criterion and overall. The instrument is a standard questionnaire consisting of 24 items, each scored on a five-point Likert scale.

SYSTEM EVALUATION

This section presents the evaluation of the developed software, the Entrance Examination System, using McCall’s Software Quality Model.

Below are the tabulated results for the system evaluation.

Table 1.0

Experts’ Evaluation of the Entrance Examination System Based on the Software Quality Criteria Set Forth by McCall

| Criteria | Expert 1 | Expert 2 | Expert 3 | Mean | Interpretation |
| --- | --- | --- | --- | --- | --- |
| 1. Auditability | 5 | 2 | 2 | 3.0 | Average |
| 2. Accuracy | 4 | 3 | 2 | 3.0 | Average |
| 3. Completeness | 4 | 3 | 3 | 3.3 | Average |
| 4. Communication Commonality | 5 | 3 | 3 | 3.7 | Good |
| 5. Conciseness | 5 | 4 | 3 | 4.0 | Good |
| 6. Consistency | 5 | 2 | 2 | 3.0 | Average |
| 7. Observability | 5 | 3 | 3 | 3.7 | Good |
| 8. Operability | 5 | 3 | 4 | 4.0 | Good |
| 9. Security | 5 | 2 | 2 | 3.0 | Average |
| 10. Documentation | 5 | 3 | 3 | 3.7 | Good |
| 11. Simplicity | 5 | 4 | 4 | 4.3 | Good |
| 12. Software System Independence | 5 | 2 | 1 | 2.7 | Average |
| 13. Traceability | 5 | 2 | 3 | 3.3 | Average |
| 14. Training | 5 | 3 | 4 | 4.0 | Good |
| 15. Controllability | 5 | 2 | 3 | 3.3 | Average |
| 16. Data Commonality | 5 | 2 | 2 | 3.0 | Average |
| 17. Decomposability | 5 | 1 | 3 | 3.0 | Average |
| 18. Error Tolerance | 4 | 1 | 2 | 2.3 | Fair |
| 19. Execution Efficiency | 5 | 3 | 3 | 3.7 | Good |
| 20. Expandability | 5 | 3 | 2 | 3.3 | Average |
| 21. Generality | 5 | 3 | 3 | 3.7 | Good |
| 22. Hardware Independence | 5 | 2 | 1 | 2.7 | Average |
| 23. Instrumentation | 5 | 3 | 3 | 3.7 | Good |
| 24. Modularity | 5 | 2 | 3 | 3.3 | Average |
| TOTAL MEAN | | | | 3.4 | Average |
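The means in Table 1.0 can be reproduced with a short script. The following is a minimal sketch, not the researchers’ actual procedure: the names `RATINGS`, `criterion_means`, and `overall_mean` are illustrative, and rounding to one decimal place is assumed from the way the table reports its values. Because every criterion has exactly three ratings, averaging all 72 individual ratings gives the same result as averaging the 24 unrounded criterion means.

```python
# Ratings transcribed from Table 1.0: criterion -> (Expert 1, Expert 2, Expert 3).
RATINGS = {
    "Auditability": (5, 2, 2),
    "Accuracy": (4, 3, 2),
    "Completeness": (4, 3, 3),
    "Communication Commonality": (5, 3, 3),
    "Conciseness": (5, 4, 3),
    "Consistency": (5, 2, 2),
    "Observability": (5, 3, 3),
    "Operability": (5, 3, 4),
    "Security": (5, 2, 2),
    "Documentation": (5, 3, 3),
    "Simplicity": (5, 4, 4),
    "Software System Independence": (5, 2, 1),
    "Traceability": (5, 2, 3),
    "Training": (5, 3, 4),
    "Controllability": (5, 2, 3),
    "Data Commonality": (5, 2, 2),
    "Decomposability": (5, 1, 3),
    "Error Tolerance": (4, 1, 2),
    "Execution Efficiency": (5, 3, 3),
    "Expandability": (5, 3, 2),
    "Generality": (5, 3, 3),
    "Hardware Independence": (5, 2, 1),
    "Instrumentation": (5, 3, 3),
    "Modularity": (5, 2, 3),
}


def criterion_means(ratings):
    """Return each criterion's mean rating, rounded to one decimal place."""
    return {
        name: round(sum(scores) / len(scores), 1)
        for name, scores in ratings.items()
    }


def overall_mean(ratings):
    """Return the mean of all individual ratings, rounded to one decimal place."""
    all_scores = [score for scores in ratings.values() for score in scores]
    return round(sum(all_scores) / len(all_scores), 1)


means = criterion_means(RATINGS)
print(means["Simplicity"])       # 4.3
print(means["Error Tolerance"])  # 2.3
print(overall_mean(RATINGS))     # 3.4
```

Keeping the raw ratings alongside the computed means makes it easy to recheck the table whenever a rating is corrected.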

Table 1.0 shows that for auditability, which measures the ease with which conformance to standards can be checked, the experts gave the developed software a mean rating of 3.0, interpreted as average. For accuracy, which refers to the precision of computations and control, the evaluators also gave a mean of 3.0, or average.

The completeness of the system, or the degree to which full implementation of the required functions has been achieved, received a mean of 3.3, interpreted as average.

For communication commonality, or the degree to which standard interfaces and protocols are used, the system received a mean of 3.7, which means good. For conciseness, or the compactness of the program in terms of lines of code, the three evaluators gave a mean rating of 4.0, interpreted as good. The system’s consistency, or the use of uniform design and documentation techniques throughout the software development project, received a mean of 3.0, or average.

Moreover, for observability, or the ease with which the software’s components can be identified and understood, the evaluators gave the developed software a mean of 3.7, interpreted as good. The system’s operability, or the ease of operating the program, received a mean of 4.0, also interpreted as good. For security, or the availability of mechanisms that control or protect the programs and data, the developed system received a mean of 3.0, or average. For the system’s self-documentation, a mean of 3.7 was derived and interpreted as good.

The simplicity of the software, or the degree to which the program can be understood without difficulty, received a mean of 4.3, interpreted as good. For software system independence, or the degree to which the program is independent of non-standard programming language features, operating system characteristics, and other environmental constraints, the evaluators gave a mean of 2.7, or average. The traceability of the system, or the ability to trace a design representation or actual program component back to requirements, received a mean of 3.3, or average.

In terms of training, or the degree to which the software assists in enabling new users to apply the system, the software received a mean of 4.0, which means good. For controllability, or the ease with which the system can be controlled and manipulated in terms of execution, program structure, and design, the evaluators gave a mean of 3.3, or average. The data commonality of the system, or the use of standard data structures and types throughout the program, received a mean of 3.0, or average.

In terms of decomposability, or whether the software is built from a series of modules that can be tested independently, the developed software received a mean of 3.0, interpreted as average. For error tolerance, or the damage that occurs when the program encounters an error, the evaluators gave the developed software a mean of 2.3, or fair. For execution efficiency, or the run-time performance of the program, the developed software received a mean of 3.7, interpreted as good. For expandability, or the degree to which the architectural, data, or procedural design can be extended, the developed software received a mean of 3.3, which means average.

For the generality of the system, or the breadth of potential application of the program components, the three evaluators gave a mean rating of 3.7, which means good. For hardware independence, or the degree to which the software is decoupled from the hardware on which it operates, the CAEE system received a mean of 2.7, or average. For instrumentation, or the degree to which the program monitors its own operation and identifies errors that do occur, a mean of 3.7 was derived and interpreted as good. Finally, for modularity, or the functional independence of program components, the software received a mean of 3.3, which means average.

Based on the results of the system evaluation, the developed software, the Entrance Examination System, received a total mean of 3.4, which is interpreted as average.
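The article reports a verbal interpretation for each mean but does not state the cut-off points used. The sketch below shows one way such a mapping could be implemented. The half-point band boundaries are an assumption chosen because they reproduce every label in Table 1.0, and the labels "Excellent" and "Poor" are hypothetical since no criterion in the table received them. The names `BANDS` and `interpret` are illustrative.

```python
# Assumed lower bounds for each verbal interpretation (not stated in the source).
# "Excellent" and "Poor" are hypothetical labels; they do not appear in Table 1.0.
BANDS = [
    (4.5, "Excellent"),
    (3.5, "Good"),
    (2.5, "Average"),
    (1.5, "Fair"),
    (1.0, "Poor"),
]


def interpret(mean):
    """Map a mean rating on the 1-5 Likert scale to a verbal interpretation."""
    if not 1.0 <= mean <= 5.0:
        raise ValueError(f"mean must be between 1 and 5, got {mean}")
    # Bands are ordered from highest to lowest, so the first match wins.
    for lower_bound, label in BANDS:
        if mean >= lower_bound:
            return label


print(interpret(2.3))  # Fair     (Error Tolerance)
print(interpret(2.7))  # Average  (Hardware Independence)
print(interpret(4.3))  # Good     (Simplicity)
print(interpret(3.4))  # Average  (total mean)
```

If the questionnaire defines its own ranges, only the values in `BANDS` need to change.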

Credits to the authors of the project.
